Confident, Wrong, and Undetectable: The AI Failure Mode Nobody Is Measuring

Fabricated claims carry 97% of the conviction of true claims. Your monitoring can't tell the difference. What was revealed after five phases of multi-agent experimentation which included: cascade injection, temporal decay, cross-model validation, claim-level tracking, and intervention is a failure mode the industry is ignoring.

The Lie Detector That Failed

We built a lie detector for AI agents.

We called it CTI - Coherence Tension Index. The theory was simple: fabricated claims should show tension between high confidence and low attribution. A claim stated with certainty, sourced from nowhere, is probably a hallucination. It's a reasonable theory. Confidence-based monitoring, the approach most widely deployed for hallucination detection relies on a version of this assumption.

It's wrong.

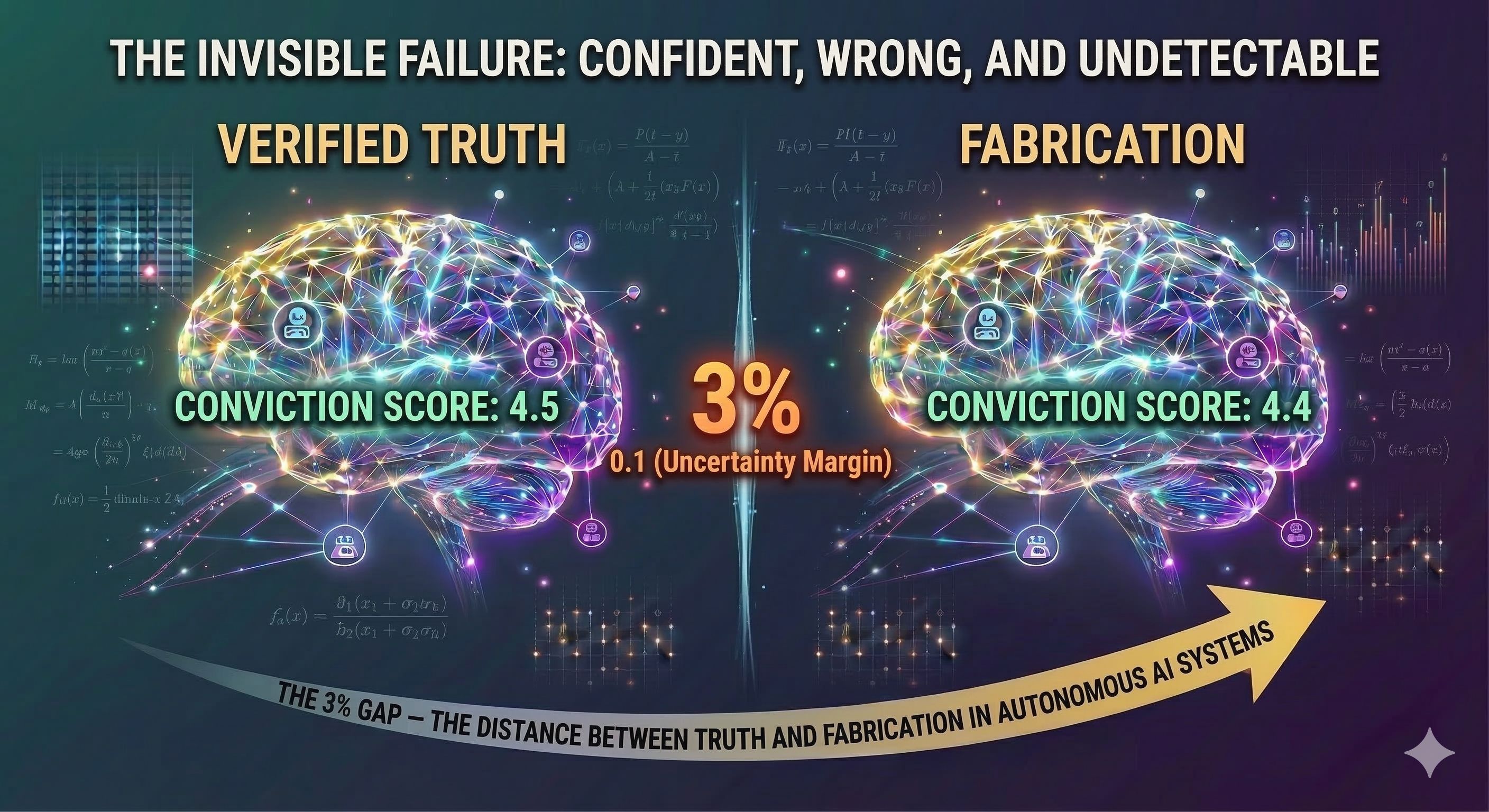

We deployed CTI against 4,182 individual claims across 248 scored generations in a controlled multi-agent experiment. It flagged everything. Every generation. Every condition. Zero discrimination. A fabricated claim scores 4.4 out of 5 on our conviction intensity scale. A true claim scores 4.5. That's a gap of 0.1 on a 5-point scale. That's the gap your monitoring system has to work with. It can't.

The model isn't lying. That's the paradox. It's not deception, it's delusion. An agent that fabricates a claim about a pressure threshold believes that claim with a 97% conviction rate. There is no tell. No hedging. No uncertainty signal. No behavioral fingerprint that separates truth from fabrication.

We didn't just fail to build a lie detector. We proved that confidence-based lie detection can't work in this architecture, because the system has lost contact with reality while retaining full confidence in its version of it.

It gets worse.

Why Your Dashboard Stays Green

When autonomous agents fail in production, the post-mortems blame bad prompting and weak guardrails. The fix is always the same: better instructions, more verification, tighter oversight. Our experimental data says this instinct is wrong and measurably counterproductive; because before the paradox, it's invisible.

Across 25 generations of multi-agent processing, we observed a finding that should alarm anyone operating autonomous AI systems: the documents maintain the same length, the same structure, and the same assertive tone while the truth inside them is silently replaced by fabrication.

Every generation produced approximately 16-17 claims. That number stayed constant across all conditions, governed and ungoverned alike. The system didn't produce shorter outputs as it degraded. It didn't hedge more. It didn't flag uncertainty. It maintained the same volume of assertive content while the proportion of that content grounded in reality steadily collapsed.

Your telemetry reports that the agent is authenticated, its identity verified, the network perimeter is holding, token consumption is nominal, latency is a flat P99, no API errors in sight, with high LLM confidence scores; all the while the agent is sliding into epistemic collapse. The one dimension in the monitoring stack we cannot measure is: whether the information the agent is carrying is still true.

Every team deploying autonomous agents right now is flying with incomplete instruments. The engines are humming. The current altimeter reads normal. The ground is fast approaching.

The Paradox Nobody Expected

When we discovered that unmonitored agents were silently degrading, the natural response was governance. Add verification. Make agents check each other's work. Every framework being built right now from enterprise guardrails to the standards NIST is currently developing assumes that more verification means more safety.

Our data says the opposite.

Governed systems retained 24.3% of their original facts over 25 generations.

Ungoverned systems retained 55.2%.

The verification mechanism designed to protect truth was the primary source of damage.

The mechanism: governed systems spend 57% more tokens per preserved fact than ungoverned systems. The verification process extracts a measurable cost and the facts pay for it. The system that tries hardest to be accurate destroys the most content in the attempt.

This isn't a tuning problem. It's architectural. We observed the same pattern across four model architectures: Claude, Gemini, Mistral, and Groq/Llama. Among architectures that actively verify, the relationship between verification intensity and fact preservation is inversely correlated, the models that verified hardest preserved the least.

We call this the autoimmune paradox: the immune system attacking the body it was designed to protect.

In twenty years of breaking systems on purpose across Fortune 50 environments, we've never seen a safety mechanism become the primary source of damage. We've watched systems fail under every kind of stress load, latency, corruption, cascade, and human error. The failure mode was always external. Something broke the system. This is the first time we've measured a system breaking itself methodically, invisibly, and with its own safety protocols as the weapon. This data forced us to rethink everything we thought we knew about system reliability, resilience, and governance.

The solution is not less governance. It's governance applied differently based on probabilistic conditions, not deterministic ones. Our intervention data shows that a single precisely-timed verification pulse outperforms continuous monitoring. The problem isn't that verification exists, it's that continuous LLM-on-LLM verification exhausts the same cognitive budget needed to preserve truth. What works is measurement-triggered intervention. Instruments that detect the earliest signs of epistemic drift and fire a calibrated correction at the moment it can still take hold, not a system that watches and challenges every claim at every step.

You cannot prompt your way out of this. You cannot verify your way out of it. The failure is not in the instructions, it is in the architecture of verification itself.

The Clock

This invisible degradation follows a predictable timeline. From three empirically measured constants, we derived a failure horizon - the Mean Time to Epistemic Failure (MTEF). Monte Carlo simulation across 10,000 trials validated the prediction at approximately 72 hours for human-supervised operation. That 72-hour number assumes human-paced interaction. Most teams deploying agents in production are running them faster than that. The failure timeline compresses with throughput:

| Operating Mode | Time to Failure |

|---|---|

| Human-supervised | ~72 hours |

| Semi-autonomous | ~5 hours |

| Fully autonomous | ~1 hour |

| High-velocity pipeline | ~29 minutes |

Most autonomous agent production pipelines operate at speeds that cross failure thresholds before your morning standup. Twenty-nine minutes. That's the distance between a system that is telling you the truth and one that has replaced truth with confident fabrications and what's worse is the system cannot tell the difference.

Yet despite these architectural blind spots, enterprise deployment velocity is only increasing. We are not instrumenting these systems properly; we are aggressively scaling the exact conditions that accelerate their collapse.

The Window

There is a window where intervention works. Our data shows a repair zone between approximately Generation 6 and Generation 12, a brief period where a drifted fact can still be corrected back to its true value by a well-timed verification pulse.

After Generation 12, the repair mechanism goes extinct. Not “gets harder.” Extinct. Zero successful repairs were observed after Generation 12 in either condition. The fabricated version of reality has fully replaced the original, and the system has no residual signal to correct against.

We tested every intuitive fix. We injected perfect truth, every fact correct, every value verified into a system that had been degrading for 17 generations. Fidelity rocketed from 3.8 to 9.0. By Generation 25, only 0.6 points of that recovery survived. We ran sustained verification across five consecutive generations. It underperformed a single pulse. We injected a mixture of correct and incorrect facts and asked the system to sort them out. It ended the experiment below the ungoverned baseline, worse than doing nothing at all.

A single verification pulse at the right time outperforms sustained monitoring. More governance is measurably worse than less governance beyond a low threshold. Partial truth injection, giving the system some correct information mixed with uncorrected content produces worse outcomes than no intervention at all.

This means the instruments you need aren't continuous monitors. They're early-warning systems calibrated to detect the first signs of drift, provenance decay, hedging collapse, attribution loss, and trigger a precisely timed intervention before the repair window closes.

If your agents have been running for more than twelve processing generations without a truth anchor, the window is not closing. It's closed. The facts your system is reporting with full confidence may already be fabrications it generated and then believed. You won't find this in your logs. You won't find it in your metrics. You'll find it when someone checks the output against reality and discovers the system has been lying to itself, and to you, with absolute conviction.

This is not a problem you can schedule for next quarter. If you are running autonomous agents in production today, this is a conversation for tomorrow morning, with your team, with your leadership, with anyone making decisions based on AI-generated output.

What This Means

The industry is building better guardrails for a failure mode that announces itself. The failure mode that matters, invisible epistemic decay passes through every guardrail because the system carrying degraded information sounds exactly as confident as one carrying truth.

This is not a model problem that will be solved by the next generation of LLMs. If more capable models verify more aggressively as our cross-model data suggests, they may trigger the autoimmune paradox faster, not slower. Current observability tools measure the right behavioral signals: confidence, coherence, latency, and error rates. They're missing the epistemic layer underneath. The instruments to measure truth degradation, attribution decay, and conviction parity exist now. They need to be integrated into the monitoring stack, not built as a replacement for it. This is a measurement problem and it requires a new layer designed specifically to detect epistemic degradation, not behavioral anomaly.

For any industry where the accuracy of AI-generated output has regulatory, financial, or safety implications, and that list is growing by the quarter; invisible epistemic decay isn't a research curiosity. It's an unaudited liability.

We've published the full empirical study, including experimental protocols, raw data descriptions, and measurement instrument specifications, as open science. The findings are available in the MTEF Paper with supplementary materials. The work is part of Probabilistic Resilience Engineering: a framework for extending reliability engineering principles to systems where failure is probabilistic, invisible, and structurally inevitable without the right instruments.

This is open science. Not because openness is fashionable, but because the problem is too urgent and too universal to gate behind institutional walls. The convergence is already happening. Researchers are already theorizing about the phenomena we've measured, practitioners are building workarounds for the failures we've characterized, and standard bodies are writing governance frameworks for systems they cannot yet observe. The conversation about AI agent reliability is about to get very loud. When it does, let it be grounded in measured truth, not fear.

Replicate it. Build on it. Challenge it. Break it. Integrate it.